Troux 2011: Up Leveling Your EA Practice, Program by Program

This was a continuation of the previous panel discussion. Speakers were:

Sean Dwyer, Northwestern Mutual

Jennifer Pfaff, Jacobs Engineering

J-P Renaud, Jacobs Engineering

Eivind Nilson, Liberty Mutual

Moderator: Chuck Keffer, Troux

Q: Where are you in rolling out your current effort?

Sean: They are trying to roll out the ability to do assessments of infrastructure, roadmaps, and then send out a system feed to the governance organization. Their goal is to only have to enter information in one place.

J-P: They are rolling out Application Optimization right now, then Alignment, then Standardization. A lot of customers at the conference do Standardization first, others indicated Alignment might be the best place to start, but for them, Optimization gave them the immediate tangible benefits they needed and put in their business case.

Eivind: They also started with Optimization and a bit of Alignment. The questions they were trying to answer led them to those two modules as opposed to Standards. They went through the data gathering process and have pretty much completed it; however, there are always new things they are finding. They are about to roll out a workshop where they bring stewards into the conversation not just as the data entry point, but as data consumers in their decision making process. They are establishing an advisory board with IT and business representation.

Q: Change management is a key part of your strategy. Can you talk about a few of the most important considerations underlying your approach?

Sean: This is a lifestyle change. If you don’t embrace this and get people to feel good about it, you’re dead. They had a change management communications lead assigned to the project that assisted them in their effort. They focus on baby steps, going for the single rather than the grand slam. They also chose not to start with Alignment. If you screw up within IT, people understand what you were trying to do and are more forgiving. If you screw up with your partners/sponsors that don’t have that solid understanding, the risks are greater.

Q: What concrete steps are you taking to keep the data current, correct, and complete?

Jennifer: They are adding more data stewards, having a first governance meeting soon, and establishing the processes.

J-P: They are taking a hierarchical approach to their data stewards for more effective management, so that each individual is responsible for only a small set of data. People also have a vested interest in keeping the data current.

Q: What are the target accomplishments that you are working toward?

Eivind: They are trying to speak in terms that are meaningful to their stakeholders. They positioned their data around some simple ideas. First, a summary view of the applications that are target versus non-target for rationalization, including which ones have a specific retirement date. The ones that don’t have a retirement date add up to some amount of dollars, which was the potential savings opportunity by IT owner. They took the same data by business owner to show the financial opportunity available. This seems to resonate very well with the people who have seen it. They also have a report that shows planned retirement by quarter. If there’s not much from your area, someone will ask why. They are documenting the dependencies and the reasoning behind their determination of target versus non-target.

Q: In launching your initiatives, did you leverage any EA framework?

Sean: No. It was more of a cultural thing.

J-P and Jennifer: No, but they did read Enterprise Architecture as Strategy and started there.

Eivind: They did use a standard business capability model for their industry, but tailored some of the language to make it specific to their company.

Q: Did you leverage any tools like stakeholder maps to obtain buy-in?

Sean: In the roadmapping effort they reached out to department heads and IT leaders and asked them what roadmap meant to them and what value they would get out of it.

Eivind: They just tried to make it as simple as possible.

Q: How does one measure success of an EA initiative and do it in a repeatable way?

J-P: Success was when they hit the ROI goal in the business case. Once past that, everything becomes easier. They are focused on that initial program and the ROI associated with it.

Sean: They have three year plans and divisional metrics. They measure how much they save through cost avoidance and reuse.

Eivind: They measure how many applications have been retired. They are still identifying targets and getting them on a roadmap.

Troux 2011: Building the Business Case for EA

This was a panel discussion, consisting of:

Sean Dwyer, Northwestern Mutual

Jennifer Pfaff, Jacobs Engineering

J-P Renaud, Jacobs Engineering

Eivind Nilson, Liberty Mutual

Moderator: Chuck Keffer, Troux

Context: Today’s reality for the global 2000 CIO. The average tenure is 18 months. They need to get started fast and work fast. They face shrinking budgets, rising expectations, and a big focus on alignment with the business.

The average annual sales of a global 2000 company is around $15 billion, and on average, 4% of that goes to IT, yielding $600 million in IT spend. Unfortunately, 80% of that may be spent on keeping the lights on, and 80% of what remains is already allocated to business-driven IT efforts, leaving $24 million or less that is truly discretionary for the CIO.
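The arithmetic behind those figures can be checked with a short sketch. The function and variable names here are my own, and the sketch assumes the second 80% applies to what is left after keeping the lights on, which is the only reading that arrives at $24 million.

```python
def discretionary_budget(annual_sales, it_pct, run_pct, committed_pct):
    """Return (IT budget, budget left after run costs, truly discretionary budget).

    All percentages are whole numbers; integer math keeps the dollar amounts exact.
    """
    it_budget = annual_sales * it_pct // 100
    # What remains once "keeping the lights on" is paid for.
    after_run = it_budget * (100 - run_pct) // 100
    # What remains once business-driven efforts have claimed their share.
    truly_discretionary = after_run * (100 - committed_pct) // 100
    return it_budget, after_run, truly_discretionary


# Figures quoted in the session: $15 billion in sales, 4% to IT, 80% and 80%.
it_budget, after_run, free = discretionary_budget(15_000_000_000, 4, 80, 80)

print(f"IT budget:           ${it_budget / 1e6:,.0f} million")
print(f"After run costs:     ${after_run / 1e6:,.0f} million")
print(f"Truly discretionary: ${free / 1e6:,.0f} million")
```

Put another way, the CIO has real discretion over 4% of the IT budget, or 0.16% of annual sales.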

Chuck then went into Troux’s question driven approach for driving business value out of EA. It’s focused on the questions that EA stakeholders are asking, and the decisions they need to make.

Q: What were the top three factors and/or considerations in building the business case for your 2011/2012 initiatives?

Sean: In 2010, they did a lot of pilot work in the APM space. They did some charts and graphs to show that they could add value. He built the “mother of all spreadsheets” to support this pilot work, and maintaining it could have been a full-time job. They made the decision to pursue tooling to support this, rather than the “Microsoft” stack. They then tried to align themselves with the IT business strategy, such as “simplifying the business technology environment.” It was going to be difficult to do this without a robust portfolio management system.

Jennifer: They had a number of objectives. They wanted to maintain sustainability, improve business alignment, and improve IT planning and systems responsiveness. On sustainability, they wanted to avoid reinventing the wheel and creating processes that would increase the amount of work they had to do. They wanted to simplify things.

J-P: He re-emphasized the sustainability point. They wanted to “operationalize” the data gathering exercise. There’s a big effort that goes into the initial data population and they wanted to make sure they had processes to keep it up to date without adding huge effort to the organization. They tried to rely on places where the information was already being entered, rather than adding a new step to existing processes.

Eivind: First, they needed a practice around application portfolio rationalization, and then had to do the rationalization itself. The insurance industry doesn’t have a tangible product; it’s a promise. Their systems are the products, then. After acquisitions, it is painful for the business to have their products in multiple suites of insurance systems. Rationalization had to happen. They are also highly regulated at the state level and a significant effort is required to maintain compliance on every one of the systems. This impacts their agility. Second, once the team was formed, they looked into tooling, including sustainability, operationalization, and business alignment. They wanted to be able to engage the business in the problem solving and in defining what the target state was.

Q: Who did you have to convince to sponsor the effort? What were their motivations?

Sean: They made a difference between commitment and motivation. They had to work with the CIO and the IT division leaders. When they did these things, it was a lifestyle change for IT. It breaks down silos and you need communication and org change to support it. They took a baby step approach, not going enterprise wide. They focused on supporting IT first, before ever going out to the business.

Q: What role did ROI play in the business case?

J-P: ROI was the business case. They don’t sell a product, they sell man-hours. Their profit margin is on the order of supermarkets. They got the ROI specifically on the application optimization piece. It was the easiest one to get hard dollars out of quickly. EA is hard to define, so they used the ROI calculator, cut the value in half twice due to the company’s conservative culture, and kept doing this until they had a value that they were comfortable making a commitment to.

Jennifer: Work with the culture in your company. You may not have to cut down the numbers as much as we did. They used Forrester data to help support their case, and ultimately, it was easy to stand behind.

Q: What was the biggest challenge you faced in building/communicating the business case?

Eivind: They felt it was important to get stewardship of the data close to the IT owners. They had to show the value to those people because they would have to commit resources to do the stewardship function. They partnered with one of those organizations and did a proof of concept with a subset of that organization’s portfolio and demonstrated the reporting capabilities. That gave them a champion. It is an ongoing effort to keep that commitment, because those groups have other priorities that don’t go away.

Q: How much was the business case for technology a part of your business case and what was the underlying argument for it?

Sean: Technology was a big part of it. If they wanted to have better capabilities and enhance their agility, they needed better tooling. If they hadn’t done the pilot work, however, they would not have been successful. Those pilots and POCs generated a lot of good will.

Q: What techniques did you employ in communicating the business case?

J-P: They did not have to sell EA. They had a CIO come in that had previous experience with EA, who kicked off the effort to establish the practice, spending a year figuring out what it needed to be. They reached a limit with EVP (Excel/Visio/PowerPoint). They generated a list of the things they could deliver with the EVP approach, and how frequently they could update them given their availability, then talked about what they could provide with better tooling and did the build versus buy analysis.

Q: How involved were the beneficiaries/stakeholders in making the business case?

Eivind: He mentioned their POC and the use of the “champion” to help establish credibility. They also reached out to the corporate business planning and strategy group, and got representation from them in the effort. This went a long way toward helping things out.

Troux 2011: Great Expectations: Ready to Move the Dial in the New IT Landscape

This presentation was given by Angela Yochem, a Business Information Executive with Dell.

Some trends:

- Reduction in size of IT.

- Move from centralized, global IT to a more decentralized model.

Successful response to these trends requires a strong, empowered EA team.

Many companies are going through business transformations, yet we are still in a state where EA and IT are not involved early in the conversations. She queried the audience, and only a handful of people said they were involved from the beginning.

More trends mentioned by Angela include creative bundling and/or packaging of solutions for best value offering to customers, along with new offerings, new pricing models, and new channels. In the discussion around this, she suggested an intellectual exercise for use on April 1. Come up with a business transformation scenario that is just barely within the realm of possibility (or not, she suggested “what if a bank acquired an airline?”) and see how your EA team would respond. Do you have the information you need to respond properly to business change?

Next trend: Increased reliance on technology to enable business transformation. What would it take if your company needed to shift from selling its products to renting them?

“We must be able to shift with changing/emerging business models.” These intellectual exercises are important for maintaining readiness, because this change is occurring all around us. “Now is the time to prepare – but most IT shops are not in a position to start.” Angela brought up the oft-quoted numbers of how much of IT spend is focused on keeping the lights on instead of new investments.

“Simplifying/globalizing processes are key to reducing IT complexity and increasing agility.”

“Cultural shifts – break reliance on f2f interaction, SMEs, additional funding, and the notion of ownership.”

Invest in business accelerating capabilities. Techniques to do this include developing expertise in business architecture capabilities; investing in acceleration teams, also known as innovation teams or SWAT teams (5-10 people that are funded out of baseline/overhead and find ways to accelerate time to market); and having strong toolsets. Knowledge is power.

Lastly, actively manage your own capabilities and career as you do your IT shop. She gave the analogy of the flight attendant instructions to put on your own oxygen mask before helping those around you. Make sure you are taking care of your own personal capabilities and the opportunities for you on an individual basis.

Architecture Fit and Fitness

In discussing how enterprise architecture supports merger, acquisition, partnership, and other evaluation opportunities with some attendees at the Troux 2011 conference this morning, I used two terms: fit and fitness.

Fit is how well things align between the two companies/products. For example, what do the capability maps look like? Did things get sliced up in a similar manner, or are they completely different? How does each company map their technology into the capability map? If the capability map is similar and the mapping of technology to capabilities is similar, then this is probably a good fit.

Fitness is the state of the things being integrated or merged. You can have a great fit, but if it is built on outdated technology, or has a poor architecture, the fitness is poor, and thus there is more risk in pursuing the activity.

What do you think?

Troux 2011: Industry Perspective: Great Performances

Moderator: George Paras, A&G Editor-in-chief

Panelists: Mike J. Walker, Principal Architect with Microsoft

Aleks Buterman, Founder, SenseAgility

Tim Westbrock, Managing Director EAdirections

Paul Preiss, CEO, IASA

This was certainly a lively panel, and I don’t envy George’s task of trying to keep it on track. Here’s what I captured.

Q: What does top performing mean to you?

Mike: High performance for EA is really about having a business conversation to maximize and amplify business results.

Aleks: The ability to quantify value in such a way that stakeholders believe.

Tim: Top performing groups figure out the things they need to do in their organization, whatever it may be, but the common thing is to become more proactive and less reactive.

Paul: There is no such thing as a top performing enterprise architecture team, there are only top performing architecture teams. We can’t leave out the other 90% of architects in the profession. For them, it is the ability to claim revenue or shareholder value, owning technology value to the company.

Aleks: Herding architects, especially enterprise architects, is a very difficult thing to do.

Mike: We’ve done a poor job in really defining what architecture is. For Enterprise Architects, is that the right brand? I don’t think it is.

Paul: We care about all of the architects. Enterprise architects account for about 10% of the architects out there. There’s a reason that the term “ivory tower” is associated with Enterprise Architect, because the terms don’t address what 90% of the people do.

Mike: Fundamental issue: we’ve mixed enterprise architecture with IT architecture. Enterprise architecture is business driven and a peer to the CIO whereas technology architecture exists solely within IT.

Aleks: Sustainable innovation is driven by integration across several domains, and that’s what enterprise architecture is about.

Tim: The primary audience is left out in too many organizations. If you’re doing Enterprise Architecture, your audience should be the leaders of the enterprise. Instead, we find our time spent with the domain or solution architect with a mythical translation of the business strategy without ever having validated it with that business leadership. Organizations that do EA the best are actively engaged with the business leadership.

Q: Where should the EA organization report? Does it matter where they report? Should it be centralized or federated?

Paul: Did a survey of the room: about 95% report in through the CIO, and only about 7 people report outside of the IT organization. This is a non-entity question. Professions get one recognized value proposition.

Tim: I don’t believe that who you report to and who your audience is are the same thing. Most people report in through IT because that’s where it started. We’re one of the few organizations that deal with things enterprise wide. We’ve been saying that we should be aligned with the business for 15 years. We all gravitate toward the comfortable. Most of us came from the project role or domain role. When it gets hard where we can make a difference by going outside of that mold, the tendency is to retreat to what is comfortable. We need to get out of our comfort zone and go talk to business activities and figure out what we can do to help them, rather than what we can do to help projects work better.

Mike: On certifications: no one is getting sued over a bad software development project (Paul disagreed, says IASA is working on some such lawsuits). All up, there is a lack of accountability within IT, we have not done a good job of being credible with the business. If 80% of all IT projects fail, have we earned the trust of our audience? We haven’t earned a seat at the table, and we need to do so through incremental benefits.

Paul: We simply haven’t claimed a seat at the table. For example, we built a website for online retail and made a company $100 million. Marketing claimed success, and got their budget increased. We need to claim the value we deliver.

Q: How do we quantify top EA performance?

Aleks: When we are dealing with large scale environments, you can’t claim a seat, you have to be invited. If you don’t toot your own horn, no one else will. The way to do so is through metrics that make sense to the business leaders. You may not have a P&L, so you need to have partners that bring you into the discussion. You quantify the value to stake claim to your seat at the table and get invited in.

Tim: I don’t think you can quantify the value of enterprise architecture. We need to get much better at quantifying the impact the EA makes on the decisions that are made and the investments that are made. EA, by itself, has no real quantifiable value. We need to quantify the value of our impact.

Aleks: Should we be called investment managers instead of enterprise architects?

Mike: We haven’t talked about the soft skills required to be an enterprise architect.

Paul: IASA states the fundamental value of architects is the ownership of technology and its value. Technology investment is a fundamental driver for profitability in today’s marketplace, yet we continually treat it as a cost center. 60% of capital expenditures is technology, yet it is the only set that doesn’t have a robust set of NPV, etc. at the shareholder level.

Q: What pointed advice would you give the audience to get to where they need to be?

Q: Back to where EA should report…

Aleks: EA should report to the board of directors.

Tim: Separation of responsibilities where business and information architecture report into the business, and application, technology/infrastructure, and data architecture report in through CIO.

Mike: Agree, but we need to look at the maturity of the organization throughout. Each industry has different principal drivers that influence the organizational structure and how you implement your architecture team. If you are a mid-sized bank, do you invest in a federated EA approach? Perhaps not, we have to be pragmatic and morph to the organization. We need a set of patterns that can be applied where they work.

Aleks: We have a guide called Enterprise Architecture as Strategy (Jeanne Ross book). If you have a diversified operational model, there are severe constraints on how much value you can add. Your operational model will influence how you structure EA.

Paul: Reporting doesn’t have any impact on the profession. We’re going to find it really hard to change the perceived value for our profession which is technology strategy. There are very few architects invited to talk about the sales process, except to make a model and understand the technology impact.

Back to how we can get there…

Tim: A lot of modeling that goes on is very bounded and implementation oriented. There are models that can’t get done within project/budgeted work. It’s part of the overhead for enterprise architecture. Do you have these models? If the answer is no, or some, then go create them. It’s a communication device that allows us to have the conversation. In the places where it happens, the communication tools need to be established so the EA can talk with the CEO. The CEO isn’t going to go to the CIO and ask to see the Enterprise Architecture guy.

Mike: All people are different and have different motivations. Our key to success depends on our ability to effectively communicate our value prop and what’s best for the organization to all of those stakeholders.

Aleks: You need to know the constraints you’re operating under according to the operating model, and you need to understand if you have an evidence-based management culture. If you don’t have an evidence based culture, it will be more challenging.

Tim: My experience has been that the models we create are nowhere near evidentiary. They tell a story and help people who have focused on making a silo as efficient as it can be see how their decisions have impacted the entire organization. It’s a hard conversation to have without visuals.

Paul: People have come up to me and said I get asked about SDLC problems, and we aren’t even doing architecture. Architects need to have skills in human dynamics, this is where we see architecture teams weakest. Second is business technology strategy, talking about business value and how do we make more money. Architects get tripped up on focusing on project success which is measured wrong. It’s not about done on time and on budget, it’s about the value it delivers.

Final comments:

Aleks: Think about the environment you’re in. Just because something worked in one situation doesn’t mean that it will work in another. Find out what the stakeholders need to hear, and deliver that, rather than espousing the gut of EA.

Mike: Change our mindset, shifting from IQ to EQ focus. Think about the method of delivery, be action oriented and pragmatic. Finally, we have to always be about value.

Paul: Connect the architect team. Get them all on the same team understanding that we’re doing the same thing. Connect them on a skill level.

Tim: Spend time reading non-technical literature that is relevant to your business.

Troux 2011: EA, ITSM, PPM, and CMDB Acronym Soup

This was a panel discussion with representatives from Forrester (I believe), Troux, HP, and CA. The text below is my attempt to capture the points made by the speakers, and does not necessarily constitute my own opinions on these subjects.

I didn’t capture the first couple of questions, but here are a couple of important points that I heard.

Don’t call things compliance reviews, call them business capability reviews. As part of that response, the speaker also said that we want enterprise architecture to be driving change, identifying the things that need to be done, and then getting feedback from the PPM and systems management tools to make sure that the intended business benefits are being delivered.

On IT planning… it is frequently a shot in the dark in a reactive mode to the business strategy because the planners lack the information necessary to have a well-aligned plan.

From this point on, I tried to capture the question and the panelist responses.

Q: How does EA joined with the ITIL/ITSM space deliver better business value?

CMDB provides much more granular information about assets, such as incidents, changes, etc. The combination of this operational data plus the data contained in an EA repository like Troux has the potential to create even greater value and decision support. At the same time, throwing a bunch of ingredients together doesn’t get you a cake. You need to have a good cook.

Q: How do you see the CMDB and the cloud working together?

Discovery, topology visualization, impact analysis are all still important things. Wherever the owner of that infrastructure is, there must be ITSM, and a CMDB. Trend in CMDB is toward real-time information.

Q: The goal is to establish PPM, ITSM, CMDB, and operationalized EA. What would my 18 month roadmap look like, or where do I start?

Forrester: Personal opinion is to start with the PPM process. How do we evaluate an investment, what kind of stages does it go through, what information do we capture about the investment? Then, now that the project is complete and we have an application, how are we tracking its business value, its alignment. Then, with a CMDB, what’s going on beneath the covers in the operation of that application.

Q: This question was on the relationship between business services in ITIL and the business capabilities in Troux.

The response was that this was a very symbiotic relationship, allowing business context to be brought into the operational side. Troux allows us to establish relationships all the way back up to a business capability, which may be very important to a change management coordinator.

Q: Understanding that CIOs are striving to integrate IT in the business, how successful can a CIO be with limited to no control over the IT budget?

The first panelist lamented the whole notion of “integrating” IT into the business. IT is part of the business, period. The lack of governance mechanisms that allow us to make a correlation between all assets in the organization and the business constituents they support has put IT in this position. Another panelist pointed out that companies that are stuck in the “utility provider” archetype really struggle to ever get out of that role and into a trusted provider or partner/player type of relationship.

Q: Oracle takes a very fast approach to EA, incumbents take a more managed approach. Is one better than the other?

So many EA teams try and fail. We have our own framework for fast-tracking EA, but it is quick and dirty. Taking your time, in general, sounds better.

Bill Cason of Troux added that if you don’t build sustainable processes, you haven’t added any value. A big part of EA’s job is to get something sustainable, regardless of how fast you build it. Management doesn’t have a lot of patience for EA today.

Q: How can software deployment integrate with EA, PPM, ITSM, and CMDB? Can it be really integrated?

The most interesting answer from the panelists was the one who said, “How can we do this without integrating them?” and went on to talk about the relationships between PPM and SDLC. Full blown PPM also goes back three years after the release and verifies that we actually received the business benefits we said we would back when the project was approved.

Troux 2011: A Step-by-Step Approach to Building EA Value at AMD

Tannia Dobbins, IT Relationship Manager from Advanced Micro Devices, gave this presentation.

She walked through some of the key drivers behind enterprise architecture over the past 5 years at AMD, initially focused on supporting acquisitions and divestitures, moving into reducing complexity and risk when the economic downturn occurred, then to process efficiencies and business enablement, and now into core architecture and design.

A constant theme throughout her presentation was that they had strong backing from their senior leadership / stakeholders. An interesting fact, though, was that there were four different CIO changes during this timeframe, yet they consistently maintained the backing that they needed. When a new CIO came in, they had the inventory of assets ready to go with the ability to slice and dice it in various ways to meet the needs of their stakeholders.

One of the things that I really liked about Tannia’s presentation was their use of targeted landing pages into the Troux portal, and even more so, their use of scorecards in their monthly operations review. Two particular scorecards she showed were a technology scorecard and an application portfolio scorecard. Both of these contained information for all of the different ownership areas on one chart, in a red/yellow/green presentation (e.g. % of things compliant with standards, % of completeness of information, % of assets on “risky” technology, etc.). Putting all of the areas/VPs on the chart also encourages compliance through a bit of peer pressure. Everyone can see who is doing well and who isn’t.

Summary points:

- It is a journey, not a destination. Take time to understand the changing dynamics and needs of the organization.

- It takes more than just management commitment, but this helps!

- The power of a few, good evangelists can ignite a grass roots effort.

- It is all about solving business problems (and financial ones too).

- Changes in leadership can be a catalyst for a new level of maturity.

- Changes in IT maturity are opportunities to leverage Troux capabilities.

Troux 2011: Let’s Talk About Results

In this session, David Hood, CEO of Troux, talked about Troux’s approach to the market. He began by presenting “Zucca’s Equations”:

W²A: Where we are

W³TG: Where we want to go

HW(GT)²: How are we going to get there

He called out that the need for EA is increasing at a rate much higher than the maturity of the EA discipline, creating a gap. To fill that gap, he saw 4 keys to success:

- Start with the end in mind

- Make it repeatable

- Make it easy to consume

- Some parenting is required

In the section on consumability, David talked about visualizations, citing both the Facebook connections visualization (as an example of a beautiful visualization) and the Billion dollar-o-gram (as an example of a disruptive visualization). Visualizations of the information are important to the practice of Enterprise Architecture.

He ended with a slide containing a picture of Mary Poppins, indicating that sometimes a spoonful of sugar will be necessary to make the medicine go down. I think this goes hand-in-hand with making things consumable. Create a consumable version (sugar) of the information to bring to light the important information (medicine) needed for our decisions, which may have been unknown before.

Troux 2011: Investing for the Future through Strategy and Architecture at Cisco Systems

Imran Qayyum, an EA for Cisco, was the presenter for this session. They used the Proact BOST framework (Business Operations Systems and Technology) to get started, providing an industry specific reference model.

Imran’s team is responsible for development services, which covers all sorts of software development technologies necessary for the Cisco Engineering teams to design and build cool stuff. They gave a good demonstration of how they are using Troux, walking through how they’ve defined their domain in terms of capabilities and business functions delivered to their customers, including roadmaps for the technologies and applications that support each business function.

In his wrap-up, he mentioned that they will be looking into integration with PPM and CMDB technologies. I believe that as EA tools increasingly move toward decision support, integration among these different systems will become increasingly important, especially ones that provide financial information, since that’s a huge component of decision making for business leaders.

Troux 2011: Technology-Enabled Health Care

Sandra McCoy, Executive Director for Enterprise Architecture with Kaiser Permanente, gave this talk, subtitled as “Architecting Standardization in a Complex Healthcare Organization.”

She mentioned that the challenges her team faces are that architects would like to work in a nice orderly fashion, but the environment never allows for that. It’s an uphill battle, with blind curves, treacherous consequences, insufficient resources, etc.

They chose to start with standards, focused on defining where they wanted to go, and less so on defining where they currently are. Standards must be clearly visible and easy to find. Their concepts must be easily understood, and they must be an enabler. They need to be marketed as an enabler and a safeguard.

One interesting anecdote in the discussion was that they initially created a big Excel spreadsheet with their standards and got challenged by stakeholders to do something more innovative/cutting edge. It’s a great example that we’re always marketing ourselves in everything we do.

She reinforced the earlier points from Bill Cason and Warren Ritchie that we have to be able to describe our assets and resources so that we can demonstrably show dependencies and impacts to business leaders as part of the decision making process.

In the Q&A portion, one person had caught that she had a box labeled “Innovation Standards.” This is the category for technologies that are under investigation that they wanted to track, including the results, to avoid having a bunch of people looking at the same thing in two or more different areas, or to also make the results of prior investigations available to others.

Her lessons learned were:

- Go slow to go fast: plan your approach (before you start talking to people), architect the 5 year vision, and create templates, messaging, RACIs and roadmaps.

- Don’t market what you can’t support: Their repository has sold itself, but people want the results and not the work (the organization needed to participate to provide data for the repository), so create a collaborative approach.

- Don’t forget to practice what you preach: Architect first, ensure the EA tools and stack are standards, and architect a comprehensive enterprise architecture solution.

Troux 2011: Strategy Management Through Enterprise Architecture

Warren Ritchie, CIO for Volkswagen Group of America, presented this session and told us about his personal journey with EA, its utility in a globalization concept, and how the EA program at VW Group of America provided initial benefits. He believes that EA is the missing piece in strategy management.

In his PhD dissertation (he has a PhD in Business Strategy), he looked for a more compelling explanation of why firms do or do not take advantage of a strategic opportunity: strategic choice or structural inertia? He found that small companies with a simple structure moved fast, medium size organizations (complex single business) were the slowest, and large, multi-business firms moved fast (possibly via a spinoff). The research pointed toward structural inertia being a key factor in whether or not a company took advantage of an opportunity.

So, if strategy implementation is about manipulating the structure, we cannot manage internal resources, products, and services as if they are independent things. If you can’t describe internal resource structure, you can’t implement strategy.

He went on to discuss VW’s strategy around sales and marketing globalization. I thought it was great that his diagram clearly showed areas for horizontal integration versus vertical integration. He talked about the notion of a modular platform for building cars, and how it was flexible and highly scalable. We need to do the same thing for organizations. Create a global core that can be customized for the particular regions. 70% of processes are global, 30% are local.

On speed to benefits, he suggested getting started with a specific “failure is not an option” project. Points on his slide were:

- Lay out the EA framework

- Populate the repository as a trailing edge activity early

- Re-Use repository content for later phases

- First efforts are labor intensive, but returns start quickly

The project they targeted was a transition in their application management service supplier. A big step was that they took the tacit knowledge that was known in the incumbent supplier, and made that information explicit in an EA repository. They are now faster with projects because they know what things connect to each other, and by being faster, projects now cost less. The EA practice is now in high demand within the IT department and starting with the business units. The ROI they’ve achieved is well over 100% on risk mitigation alone. They are in a position where they can go to suppliers with their model, and those suppliers map to it.

The overall conclusions were:

- Organizations that are better able to describe themselves are more adaptive to market opportunity.

- Globalization strategy of sales and marketing requires a rigorous description of organization’s internal structure.

- After an initial investment in EA, each incremental investment is resulting in disproportionately positive returns.

Troux 2011: How to Develop an IT Strategy to Accelerate Business Goals

The first session I’m in is one of the few sessions by Troux staff at the conference, being given by Bill Cason, CTO of Troux. Always nice to see a CTO doing these things.

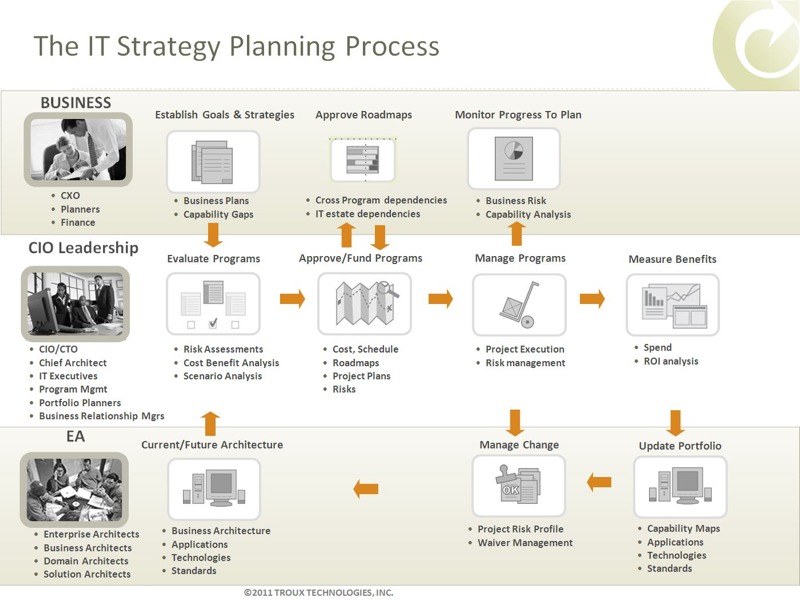

Bill went over the IT strategy planning process, involving the business, the office of the CIO, and enterprise architecture. There was a good slide that visually showed the process as Troux sees it that I’ll try to get and repost here. Update: here’s the slide:

Early on, he emphasized the need to capture the business context in the forms of goals and strategies, as well as to have EA be the keeper of business capability maps, defined through collaboration with the business. These capability maps are what allow for fact-based conversations that aid the decision making process.

Bill’s Top 10 things to do:

- Help your boss. Their surveys show that most CIOs are managing strategy on their own.

- Establish sustainable processes. Get sponsorship, establish stewardship, define all roles and establish them, and get agreement on governance and measures.

- Involve the business

- Understand how organizations think. IT thinks about IT assets and activities, the business thinks about business capabilities. The capability map must align the IT portfolio with the business capabilities.

- Recognize what tools they use. Business has lots of manual methods, IT has some automation.

- Establish business context. If you can’t involve the business, at least try to understand their objectives.

- Start with the business questions. What does the business want to do? Where should it transform? How are the transformations progressing?

- Focus on key areas. “Burning platforms”: M&A, divestitures, regulatory drivers, time to market. This helps focus the work and make it more digestible. Start with a near-term focus and grow the scope over time.

- Influence departmental behavior. Link business roadmaps to IT roadmaps, communicate the dependencies and impacts.

- Be flexible. Executives will never use EA models.

The Empowered Business

Alex Cullen of Forrester posted an interesting blog regarding the “inevitable trend” of business empowerment. At the recent Forrester EA Forum, they invited attendees to rate on a 1-5 scale, whether or not “the EA function has close ties with business management” and whether or not the “technology strategy and standards allow for rapidly changing technologies.” Not surprisingly, in my opinion, only 33% of respondents answered positively on the first question, and only 27% answered positively on the second question.

The combination of these two questions is very interesting. I’m very pragmatic when it comes to discussions about standards. Arbitrary standards may allow someone to fill out a compliance dashboard or meet their personal or team objectives, but the majority of those standards may not have any positive impact on the company’s strategy and goals, and in fact, may be an inhibitor. At the same time, this same linkage to strategy and goals must also apply to the use of new technologies. Someone needs to be the enterprise parent that asks the question, “do you really need that?” It may be a shiny new thing, but does it make a difference in the ability to accomplish the strategy and goals?

This is by no means an easy problem. Yes, sometimes there are very clear cost-cutting initiatives that make it easy to drive certain standards decisions. Sometimes, things are not so clear. For example, I have had conversations with people in the financial services industry who told me that the technology available to the financial consultants was important for recruiting. A company with slow technology adoption processes could be at risk of losing their top consultants, or failing to attract new ones, which winds up having a direct impact on company revenues.

The end result of all of this is that work on these two items must go hand in hand. You can’t hope to establish standards in the right areas if you don’t have an intimate knowledge of the business strategy and goals. You can’t have that intimate knowledge unless you have strong ties with business management. If the enterprise architecture team does not have these strong ties, they’re going to have to balance second-hand explanations against the potentially disconnected goals of the department to which they report (e.g. IT).

The right model for EA has to be that of the trusted advisor. I’ve commented about this previously in “Enterprise Architect: Advisor versus Gatekeeper” and “IT Needs To Be More Advisory”. When EA is being grown from within IT, a big challenge may be to establish that trust. Like it or not, the EA team may be carrying the baggage of the entire IT department with it. Trust is earned, so we need to find a way to establish one or more strong relationships with key business leaders, and then use those relationships to scale from there.

Effective IT Governance

On Twitter, Scott Ambler (@scottwambler) posted:

Effective IT governance is based on motivation and enablement, not command and control.

At first glance, it would be hard to argue with this statement. In general, most people don’t like command and control, and who wouldn’t prefer the carrot over the stick? Having thought a lot about governance (and written a lot, too; hint, hint), I had to go deeper on what effective governance really entails. I’ve seen situations where a command and control governing style has succeeded and ones where it has failed. I’ve seen the same thing for motivation and enablement styles, as well. So what really is the key?

In situations where things turned out well for the company, it was because the organization, as a whole, saw things in the same way. They understood the strategic priorities and goals and balanced these against departmental or project priorities and goals in an appropriate way. Where things turned out badly is where those priorities and goals were not well understood, if they even existed at all. In other words, everyone had their own opinion on what the right thing to do was. In general, people always had good intentions with the decisions that they made, but the criteria they used to choose the best approach were not consistent from person to person or team to team. In the absence of this understanding, neither command and control nor motivation and enablement will fix things. Put in a bunch of commanders that don’t have a shared understanding, and you get a power struggle. Likewise, if we simply try to remove barriers and “enable” people, that is not going to help when accomplishing goals involves cross-project or cross-organizational efforts.

To have effective governance, you must first have clarity and a shared understanding of the goals and strategy around your efforts. If you don’t have this, then you may need to begin with a heavier command and control approach to not only get the word out, but to ensure that it sinks in. Once you build that shared understanding, a shift to a focus on enablement is certainly in order. If the understanding has taken root, people don’t need to be controlled anymore, and that should be your goal. Along with this, however, your people must be able to recognize when things fall into the grey area. As part of being enabled, there needs to be a trust factor that people will make those grey areas known to the command structure, even perhaps with options that look at things from both a micro and macro level. The command structure, in turn, must make decisions in an efficient manner, and then work its communication processes to augment the shared understanding in the organization.

If I had to put effective IT governance in a nutshell, it’s all about communication. If your communication is great, which means that you effectively communicate not just the direction but the reasons behind it, and you have a feedback process for discussing it with people who may disagree with it, you’re likely to have effective governance.

Make 2011 the Year of the Event

In this, my first blog post of 2011, I’d like to issue a challenge to the blogosphere to make 2011 the year of the event. There was no shortage of discussions about services in the 2000s; let’s have the same type of focus and advances in events in the 2010s.

How many of your systems are designed to issue event notifications to other systems when information is updated? In my own personal experience, this is not a common pattern. Instead, what I more frequently see is systems that always query a data source (even though it may be an expensive operation) because a change may have occurred, even though 99% of the time, the data hasn’t. Rather than optimizing the system to perform as well as possible for the majority of the requests by caching the information to optimize retrieval, the systems are designed to avoid showing stale data, which can have a significant performance impact when going back to the source(s) is an expensive operation.

With so much focus on web-based systems, many have settled into a request/response type of thinking, and haven’t embraced the nearly real-time world. I call it nearly real-time, because truly real-time is really an edge case. Yes, there are situations where real-time is really needed, but for most things, nearly real-time is good enough. In the request/response world, our thinking tends to be unidirectional. I need data from you, so I ask you for it, and you send me a response. If I don’t initiate the conversation, I hear nothing from you.

This thinking needs to broaden to where a dependency means that information exchanges are initiated in both directions. When the data is updated, an event is published, and dependent systems can choose to perform actions. In this model, a dependent system could keep an optimized copy of the information it needs, and create update processes based upon the receipt of the event. This could save lots of unnecessary communication and improve the performance of the systems.
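To make the model concrete, here is a minimal sketch in Python of a source system that publishes an event on every update and a dependent system that refreshes its own optimized copy when the event arrives. Everything in it is an assumption for illustration: the `EventBus`, `CustomerMaster`, and `DependentSystem` classes and the `customer.updated` topic are hypothetical names, and the bus is a simple in-process stand-in for whatever messaging channel you actually have.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """Tiny in-process publish/subscribe channel (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Deliver the event to every handler registered for this topic.
        for handler in self._subscribers[topic]:
            handler(event)


class CustomerMaster:
    """System of record: publishes an event whenever its data changes."""

    def __init__(self, bus: EventBus) -> None:
        self._bus = bus
        self._records: Dict[str, str] = {}
        self.reads = 0  # counts (notionally expensive) reads against the source

    def update(self, customer_id: str, name: str) -> None:
        self._records[customer_id] = name
        self._bus.publish("customer.updated", {"id": customer_id, "name": name})

    def expensive_lookup(self, customer_id: str) -> str:
        self.reads += 1
        return self._records[customer_id]


class DependentSystem:
    """Keeps a local copy, refreshed by events instead of repeated queries."""

    def __init__(self, bus: EventBus) -> None:
        self._cache: Dict[str, str] = {}
        bus.subscribe("customer.updated", self._on_customer_updated)

    def _on_customer_updated(self, event: dict) -> None:
        self._cache[event["id"]] = event["name"]

    def customer_name(self, customer_id: str) -> str:
        # Served from the local copy; the source is never queried here.
        return self._cache[customer_id]


bus = EventBus()
master = CustomerMaster(bus)
dependent = DependentSystem(bus)

master.update("c1", "Acme")
names = [dependent.customer_name("c1") for _ in range(100)]
master.update("c1", "Acme Corp")
latest = dependent.customer_name("c1")
```

In this sketch the hundred reads are all answered from the dependent system's copy, and `master.reads` stays at zero because `expensive_lookup` is never called. A real deployment would also need an initial load for data that changed before the subscription existed, which is left out here.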

This isn’t anything new. Scalable business systems in the pre-web days leveraged asynchronous communication extensively. User interface frameworks leveraged event-based communication extensively. It should be commonplace by now to look at a solution and inquire about the services it exposes and uses, but is it commonplace to ask about the events it creates or needs?

Unfortunately, there is still a big hurdle. There is no standard channel for publishing and receiving events. We have enterprise messaging systems, but access to those systems isn’t normally a part of the standard framework for an application. We need something incredibly simple, using tools that are readily available in big enterprise platforms as well as emerging development languages. Why can’t a system simply “follow” another system and tap into the event stream looking for appropriately tagged messages? Yes, there are delivery concerns in many situations, but don’t let a need for guaranteed delivery so overburden the ability to get on the bus that designers just forsake an event-based model completely. I’d much rather see a solution embrace events and do something different like using a Twitter-like system (or even Twitter itself, complete with its availability challenges) for event broadcast and reception, than to continue down the path of unnecessary queries back to a master and nightly jobs that push data around. Let’s make 2011 the year that kick-started the event based movement in our solutions.
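As a rough illustration of the “follow” idea, the sketch below shows one system following another and receiving only the messages that carry a tag it cares about. It is a best-effort, in-memory toy with no delivery guarantees, which is exactly the trade-off described above. The `EventStream` class, its `follow` and `post` methods, and the system and tag names are all hypothetical; nothing here corresponds to a real product or to Twitter’s API.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class Event:
    source: str            # name of the system that posted the event
    tags: FrozenSet[str]   # labels that followers filter on
    body: str


class EventStream:
    """Best-effort broadcast stream (hypothetical): no persistence, no retries."""

    def __init__(self) -> None:
        self._followers: List[Tuple[str, FrozenSet[str], Callable[[Event], None]]] = []

    def follow(self, source: str, tags: Iterable[str],
               handler: Callable[[Event], None]) -> None:
        # Register interest in events from `source` that carry any of `tags`.
        self._followers.append((source, frozenset(tags), handler))

    def post(self, source: str, tags: Iterable[str], body: str) -> int:
        # Broadcast an event and return how many followers received it.
        event = Event(source, frozenset(tags), body)
        delivered = 0
        for followed, wanted, handler in self._followers:
            if followed == source and wanted & event.tags:
                handler(event)
                delivered += 1
        return delivered


stream = EventStream()
received: List[Event] = []

# A hypothetical warehouse system follows "orders" for shipment events only.
stream.follow("orders", {"shipped"}, received.append)

a = stream.post("orders", {"shipped", "priority"}, "order 42 shipped")
b = stream.post("orders", {"created"}, "order 43 created")
c = stream.post("billing", {"shipped"}, "invoice 7 sent")
```

Only the first post reaches the follower: the second has no matching tag, and the third comes from a system that is not being followed. Where guaranteed delivery really is required, that would have to be layered on top or handled by a proper messaging system.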